Most of us are aware that Docker containers and services can connect with one another and with other non-Docker workloads. This capability is called Docker networking. As far as networking is concerned, Docker containers and services need not know that they are deployed on Docker, or whether the peers they are connected to are Docker workloads. This article discusses how containers and services are connected. Let us begin with the topics covered in this article.

- What is Docker Networking?

- And, what is a Container Network Model (CNM)?

- What are various Network Drivers in it?

- Bridge Network Driver

- None Network Driver

- Host Network Driver

- Overlay Network Driver

- Macvlan Network Driver

- Basic Networking with Docker

- docker network ls command

- Inspect a Docker network - docker network inspect

- docker network create

- How to connect the docker container to the network?

- How to use host networking in docker?

- Docker Compose Network

- Updating containers in the Docker Compose network

- Docker Compose Default Networking

What is Docker Networking?

Docker networking can be defined as the communication channel that allows isolated Docker containers to communicate with one another to perform required actions or tasks.

A Docker Network typically has features or goals shown below:

- Flexibility – It provides flexibility for various applications on different platforms to communicate with each other.

- Cross-Platform – Docker networking works across platforms, and with Docker Swarm clusters it also works across multiple servers.

- Scalability – Being a fully distributed network, applications can scale and grow individually while also ensuring performance.

- Decentralized – Docker networking is decentralized, which lets applications be spread across hosts and remain highly available. If a container or host drops out of the pool of resources, its services can be passed over to the other available resources, or a new resource can be brought in.

- User-friendly – Deployment of services is easier.

- Support – Docker offers out-of-the-box support, and its functionality is straightforward, making Docker networks easy to use.

We have a model named "Container Network Model (CNM)", which supports all the above features. Let's understand the details of this in the following section:

What is a Container Network Model (CNM)?

The Container Network Model (CNM) uses multiple network drivers to provide networking for containers. The CNM standardizes the steps required to provide this networking. CNM stores the network configuration in a distributed key-value store such as Consul.

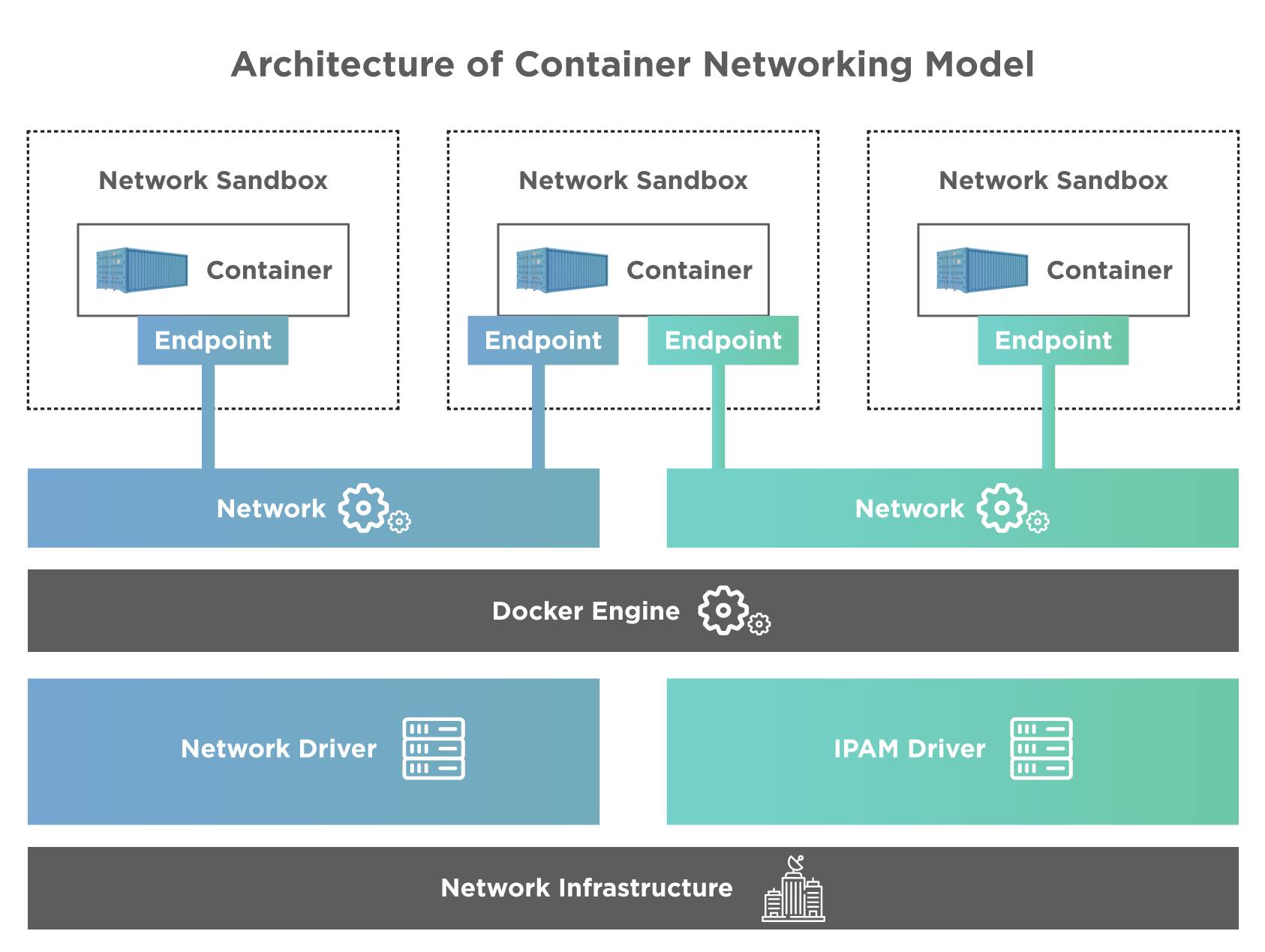

CNM architecture is shown below:

As seen from the above diagram, CNM has interfaces for IPAM and network plugins. The IPAM plugin APIs create and delete address pools, and allocate and deallocate container IP addresses as containers join or leave the network. The network plugin APIs create and delete networks, and add containers to or remove them from a network.

The above diagram also shows 5 main objects of CNM. Let us discuss these objects one by one.

- Network Controller: The network controller exposes simple APIs that Docker Engine uses to allocate and manage networks.

- Driver: The driver is responsible for managing the network. Driver owns the network, and there can be multiple drivers that participate in networking to fulfill various deployment and use-case scenarios.

- Network: Network provides connectivity between the endpoints of the same network and isolates them from the rest. The corresponding driver is notified whenever the network is updated or newly created.

- Endpoint: An endpoint connects the services exposed by one container on a network to the services provided by the other containers on that network. An endpoint represents a service, not a container, and has global scope.

- Sandbox: A sandbox is created when a user requests an endpoint on a network. A sandbox can have more than one endpoint, each attached to a different network.

With this basic knowledge, we will now move on to the types of network drivers that Docker supports.

What are various Network Drivers in it?

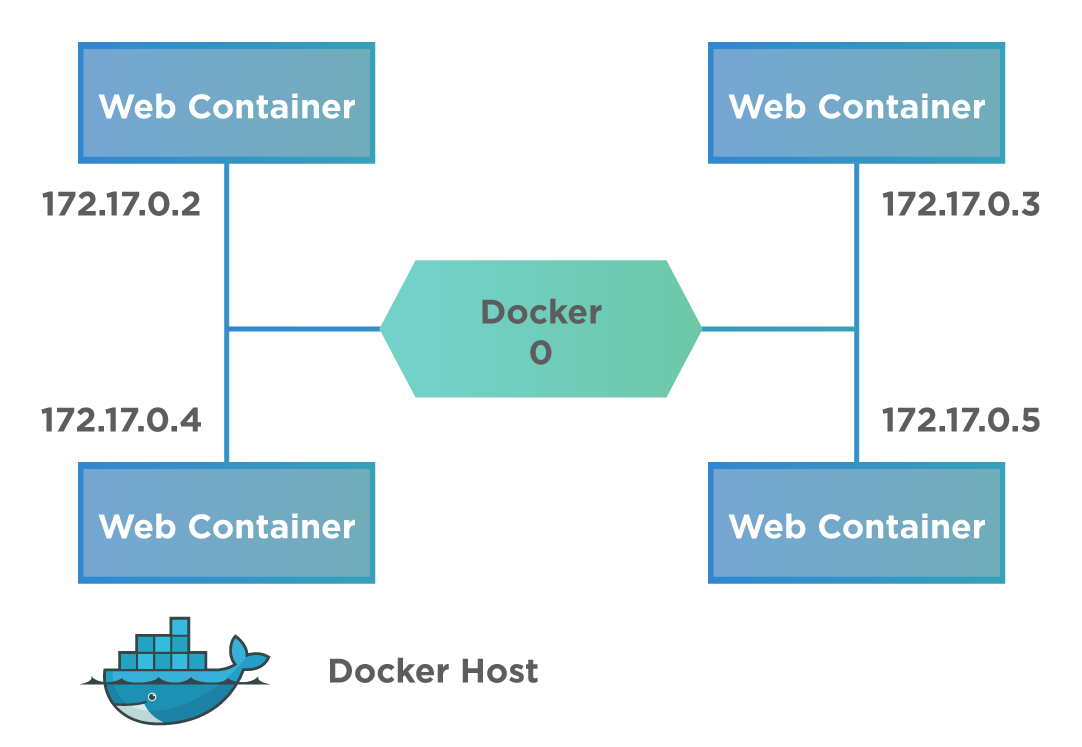

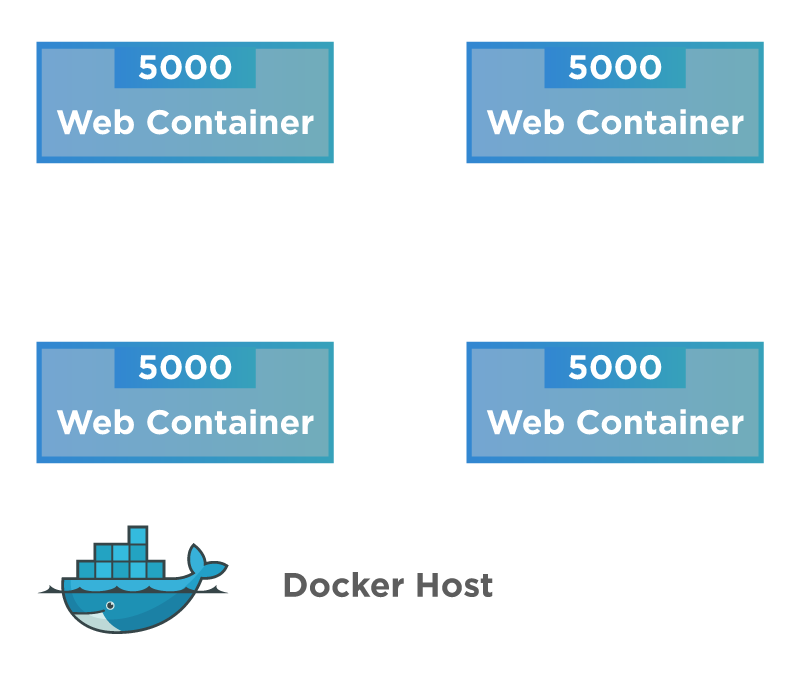

Docker supports various network drivers that make networking easier. Docker creates a private internal network on the host. A typical communication of the containers on a host machine is shown as below:

As a result of this default private network, all containers have an internal IP address and can reach each other using it. Docker uses various drivers for this communication between containers. Bridge is the default network driver: if we do not explicitly specify a driver, Docker uses the bridge driver. Let's look at the network drivers Docker supports for inter-container communication:

Bridge Network Driver

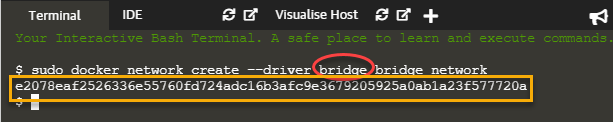

We can create a bridge network using the docker network create command as follows:

docker network create --driver bridge bridge_network

When we execute the above command, it returns an ID that identifies the bridge network we just created, as shown in the screenshot below.

As seen from the above screenshot, we specify the type of network in the command (bridge in this case, indicated by a red circle). The command then returns the ID of the created network (yellow rectangle), indicating that it executed successfully.
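The same command also accepts options that pin the address range of the new network. The following is a minimal sketch rather than part of the original walkthrough: the helper name `create_bridge_network`, the network name `demo_bridge`, and the subnet and gateway values are placeholders chosen for illustration, while `--subnet` and `--gateway` are standard options of `docker network create`.

```shell
# Create a user-defined bridge network with an explicit address range.
# Arguments: <network-name> <subnet-in-CIDR-form> <gateway-address>
create_bridge_network() {
    if [ "$#" -ne 3 ]; then
        echo "usage: create_bridge_network NAME SUBNET GATEWAY" >&2
        return 2
    fi
    docker network create \
        --driver bridge \
        --subnet "$2" \
        --gateway "$3" \
        "$1"
}

# Example call (all three values are placeholders for this sketch):
# create_bridge_network demo_bridge 172.28.0.0/16 172.28.0.1
```

Choosing the subnet explicitly can help when the automatically assigned range would clash with an address range already in use on the host's network.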

None Network Driver

When the network driver is 'None', the containers are not attached to any network, and they have no access to other containers or to external networks. The following diagram shows a Docker host with a 'None' network.

So when can we use this type of network? We use the 'None' network driver when we want to completely disable the networking stack of a particular container, leaving it with only a loopback device. Note that the 'None' network driver is not available for Swarm services.
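One way to see the effect of the 'None' driver is to list the interfaces inside such a container. The sketch below is an illustration under stated assumptions: the helper name `show_isolated_interfaces` is made up for this example, and the default image `alpine` is assumed only because it ships the `ip` tool; any image that provides `ip` would work.

```shell
# Run a one-off container with networking disabled and print its interfaces.
# Argument: image name (optional, defaults to "alpine", an assumption here).
show_isolated_interfaces() {
    image="${1:-alpine}"
    # --network none selects the None driver; --rm deletes the container on exit.
    docker run --rm --network none "$image" ip addr
}

# Example call:
# show_isolated_interfaces
```

Inside the container, only the loopback interface should be listed, which matches the description above.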

Host Network Driver

The Host network driver removes network isolation between Docker host and docker containers. The host network driver is shown in the below diagram.

From the above diagram, we can see that containers directly use the host's networking once this isolation is removed. However, we cannot run multiple web containers on the same port of the same host in a host network, because the host's ports are now shared by all containers on that network.

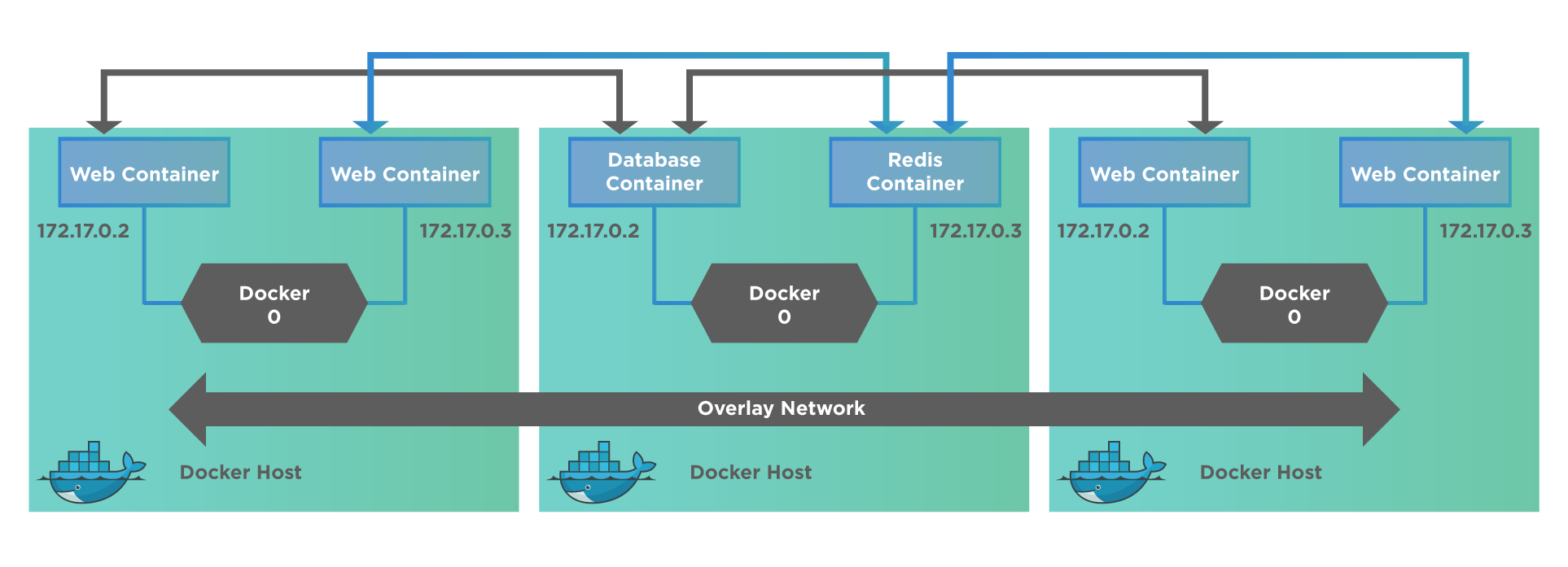

Overlay Network Driver

An overlay network is mostly used with a Swarm cluster. A diagram representing the overlay network is shown below.

An overlay network creates an internal private network that spans all the nodes in the swarm cluster. It facilitates communication between a swarm service and a standalone container, or between two containers running on different Docker daemons. In other words, the overlay network links multiple Docker daemons and enables Swarm services to communicate with each other. We can refer to Networking with Overlay networks for more details.
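As a rough sketch of how such a network might be created, the helper below first checks that the node is part of a swarm and then creates an overlay network that standalone containers can also join. The helper name `create_attachable_overlay` and the network name `demo_overlay` are hypothetical; `--attachable` and the `.Swarm.LocalNodeState` field of `docker info` are existing Docker features. The command is assumed to run on a swarm manager node.

```shell
# Create an overlay network that standalone containers may attach to.
# Argument: <network-name>
create_attachable_overlay() {
    # Overlay networks need swarm mode; check the local node state first.
    state="$(docker info --format '{{.Swarm.LocalNodeState}}')"
    if [ "$state" != "active" ]; then
        echo "this node is not part of a swarm; run 'docker swarm init' first" >&2
        return 1
    fi
    docker network create --driver overlay --attachable "$1"
}

# Example call (network name is a placeholder):
# create_attachable_overlay demo_overlay
```

Without `--attachable`, only swarm services can use the overlay network, so the flag matters when standalone containers need to join it.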

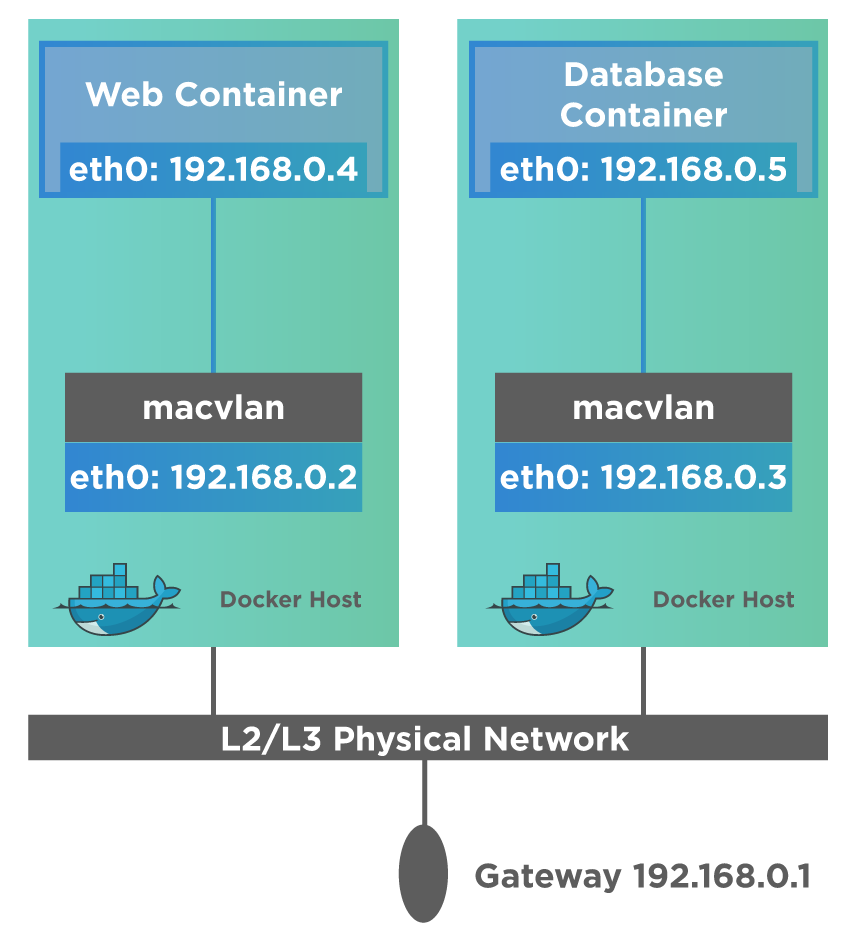

Macvlan Network Driver

The Macvlan driver is often the best choice when containers need to connect directly to the physical network instead of being routed through the Docker host's network stack.

The following diagram shows the Macvlan network.

Using the Macvlan network, we can assign a MAC address to a container, which then appears as a physical device on the network. The Docker Daemon can then route the traffic to containers using their MAC addresses. Go through Networking with Macvlan network for more on Macvlan network.
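A Macvlan network is tied to a physical interface of the host, so creating one requires naming that interface. The following sketch shows the general shape of the command. The helper name `create_macvlan_network`, the interface `eth0`, and the addresses in the example call are assumptions that must be replaced with values matching the actual physical network.

```shell
# Create a Macvlan network bound to a physical host interface.
# Arguments: <network-name> <parent-interface> <subnet-in-CIDR-form> <gateway>
create_macvlan_network() {
    if [ "$#" -ne 4 ]; then
        echo "usage: create_macvlan_network NAME PARENT SUBNET GATEWAY" >&2
        return 2
    fi
    # "-o parent=..." tells the macvlan driver which host interface to use.
    docker network create \
        --driver macvlan \
        --subnet "$3" \
        --gateway "$4" \
        -o "parent=$2" \
        "$1"
}

# Example call (interface and addresses are placeholders):
# create_macvlan_network demo_macvlan eth0 192.168.1.0/24 192.168.1.1
```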

Basic Networking with Docker

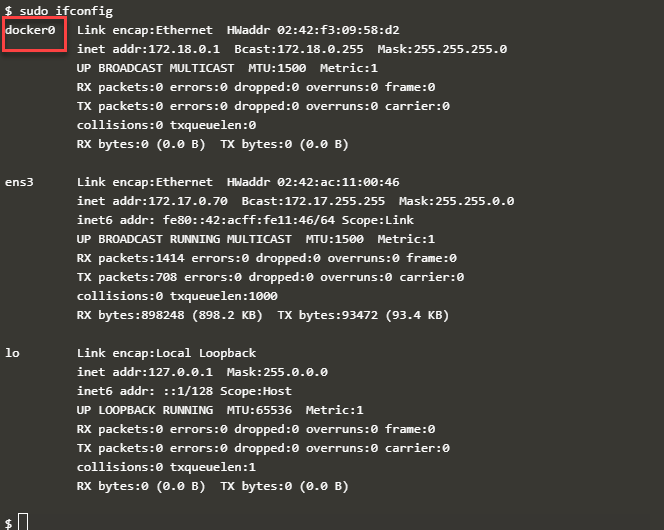

Docker containers communicate with one another and with the Docker host so that they can share information and data, provide services, and help applications run smoothly. Docker networking makes this possible. In fact, Docker creates an Ethernet adapter on the host when it is installed, so when we execute the 'ifconfig' command on the Docker host, we can see the details of this adapter. This Ethernet adapter handles the networking for Docker's default bridge network.

The execution of ifconfig on the Docker host produces the following output.

The above screenshot shows the Ethernet Adapter 'docker0' on the docker host. Let us now discuss the basic networking commands used in Docker.

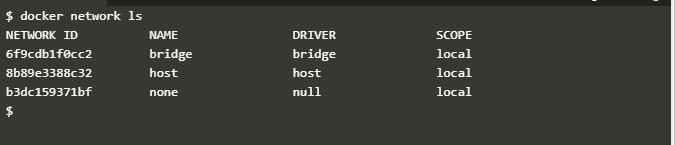

docker network ls command

The 'docker network ls' command has the following syntax:

docker network ls

This command produces the following output when executed.

As shown in the above screenshot, the 'docker network ls' command lists all the networks known to Docker on the host. The command takes no positional arguments, although it accepts options such as '--filter' and '--format'.
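When a host has many networks, the listing can be narrowed with the `--filter` and `--format` options of `docker network ls`. The helper below is a small sketch; its name `list_networks_by_driver` is invented for this example.

```shell
# Print only the names of the networks that use a given driver.
# Argument: <driver-name>, for example bridge, host, overlay, macvlan or null.
list_networks_by_driver() {
    docker network ls --filter "driver=$1" --format '{{.Name}}'
}

# Example call:
# list_networks_by_driver bridge
```

On a fresh installation, the call for the `bridge` driver would be expected to list the default `bridge` network along with any user-defined bridge networks.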

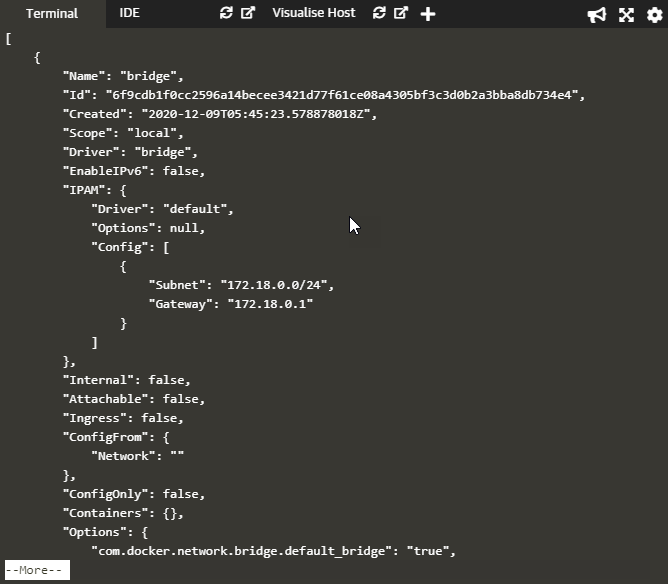

Inspect a Docker network - docker network inspect

The command 'docker network inspect' allows us to see more details about a particular Docker network. The general syntax of this command is as follows:

docker network inspect networkname

Here, networkname is the name of the network we need to inspect. The command returns the details of that network.

For example, the command

docker network inspect bridge

will produce the following output.

As shown, this command generates the details of the bridge network, which is the default network present on the Docker host.
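The full output of `docker network inspect` is a JSON document, and often only one field is of interest. As a sketch, the helper below uses the command's `--format` option with a Go template to print just the subnets configured for a network. The helper name `network_subnets` is hypothetical; the `.IPAM.Config` and `.Subnet` fields correspond to the "IPAM" section of the inspect output.

```shell
# Print the subnet(s) configured for a network, one per line.
# Argument: <network-name>
network_subnets() {
    docker network inspect \
        --format '{{range .IPAM.Config}}{{println .Subnet}}{{end}}' \
        "$1"
}

# Example call:
# network_subnets bridge
```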

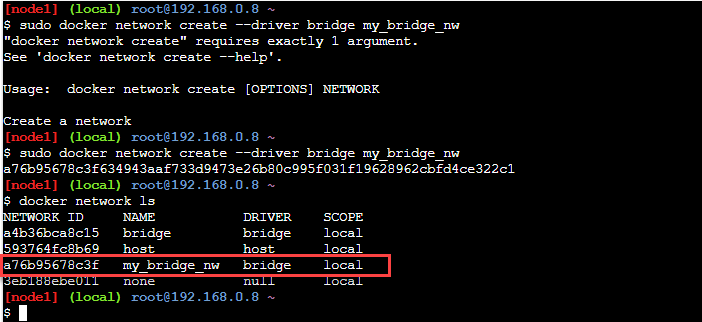

docker network create

We can also create our own network in Docker using the command "docker network create". This command has the following general syntax:

docker network create --driver drivername name

Here, "drivername" is the name of the network driver used.

"name" is the name of the network newly created.

A new network specified by the 'name' is created on the execution of this command, and a long ID for the network is returned.

Let us create a new network, 'my_bridge_nw', using the following command.

docker network create --driver bridge my_bridge_nw

On execution, this command generates the following output.

From the above screenshot, we can see that the 'docker network create' command returns the long ID of the network 'my_bridge_nw' just created. To verify that the new network exists, we can run the command 'docker network ls' and see the new network listed in its output (red rectangle in the screenshot).

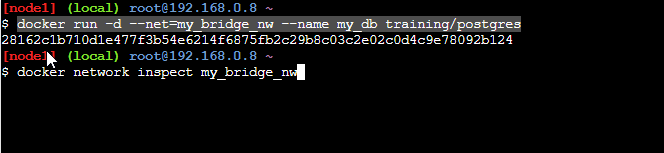

How to connect the docker container to the network?

Once we create a new network, we need to attach the Docker container to it. This is done by passing the '--net' option to the 'docker run' command, with the name of the network the container should join as its value.

Let us execute the following command.

docker run -d --net=my_bridge_nw --name my_db training/postgres

The above command starts a container named 'my_db' from the 'training/postgres' image and attaches it to the network 'my_bridge_nw'.

The output of the command is shown below.

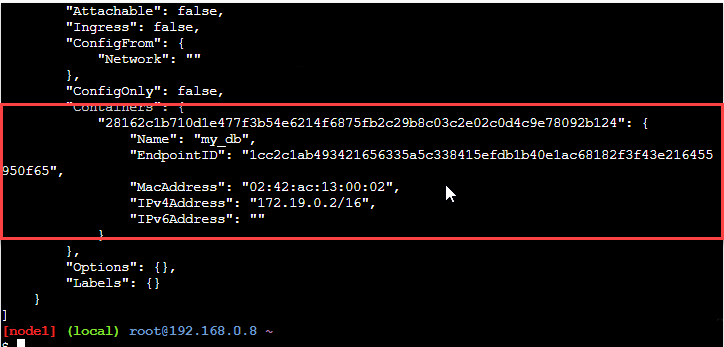

We can see the container is attached to the network now. To verify this, we now inspect the network "my_bridge_nw" as follows:

docker network inspect my_bridge_nw

This command now shows the following output.

The above screenshot shows the container attached to the network (indicated by the red rectangle).
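The '--net' option applies when a container is started. For a container that already exists, Docker provides the `docker network connect` and `docker network disconnect` commands. The sketch below wraps them in small helpers whose names (`attach_container`, `detach_container`, `attached_containers`) are made up for this example; the network and container names in the example calls are the ones used above.

```shell
# Attach an existing container to a network.
# Arguments: <network-name> <container-name>
attach_container() {
    docker network connect "$1" "$2"
}

# Detach a container from a network once it no longer needs it.
# Arguments: <network-name> <container-name>
detach_container() {
    docker network disconnect "$1" "$2"
}

# Print the names of the containers attached to a network, one per line.
# Argument: <network-name>
attached_containers() {
    docker network inspect \
        --format '{{range .Containers}}{{println .Name}}{{end}}' \
        "$1"
}

# Example calls:
# attached_containers my_bridge_nw
# detach_container my_bridge_nw my_db
# attach_container my_bridge_nw my_db
```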

How to use the host networking in docker?

We can bind the Docker container directly to the host network, without network isolation. This gives the feel of running the process directly on the Docker host, without a container. In all other respects, such as the process and user namespaces and storage, the process remains isolated from the host.

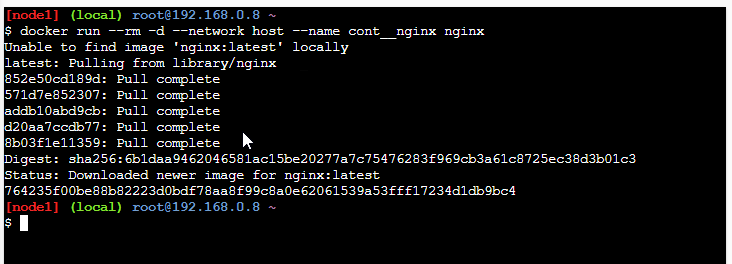

Let us execute the following command.

docker run --rm -d --network host --name cont__nginx nginx

This command starts the container in the background: the '-d' option runs it as a detached process, and the '--rm' option removes the container once it stops or exits.

The output/ result of the above command is as below:

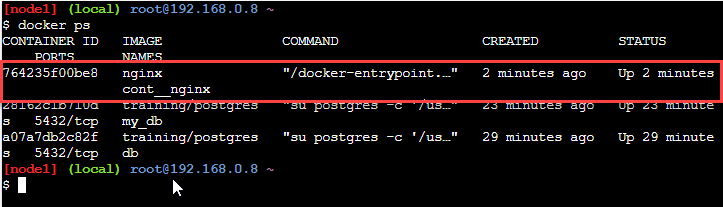

So here we create a new detached nginx container named 'cont__nginx'. To verify that it was created, we can execute the following command.

docker ps

The output of this command shows that the container started in the above command is, in fact, running.

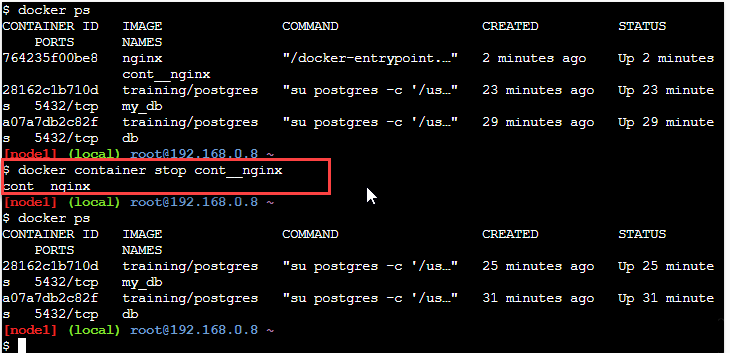

The running container above (shown in the red rectangle) can be stopped using Docker's stop command, as shown below.

docker container stop cont__nginx

The output of the command is as below.

In the above screenshot, we have stopped the running nginx container using the above command. Once the container stops, it is removed automatically because it was started with the '--rm' option.

Docker Compose Network

We have discussed Docker Compose in our last article. By default, Docker Compose sets up a single network for an application. Each container joins this default network, can be reached by the other containers on it, and can be discovered by them at a hostname identical to the container name.

Updating containers in the Docker Compose network

We can change the configuration of a service in the Docker Compose file. When we then execute the command 'docker-compose up' to update the containers, the old container is removed and a new one is created in its place. Note that the new container has a different IP address from the old one but the same name. Any connections that other containers had open to the old container are closed, and those containers can reconnect to the new container by looking it up by name.

Linking containers

Consider the following Docker Compose file (YAML):

    version: '2'
    services:
      web:
        build: .
        links:
          - "db:pgdatabase"
      db:
        image: postgres

In the above YAML file, the 'web' container has specified an additional alias 'pgdatabase' for the 'db' container using the 'links' tag. This implies the container 'web' can reach the 'db' container either through 'db' or 'pgdatabase' as hostnames. This way, we can link the containers to one another.

Docker Compose Default Networking

With Docker Compose, we can either configure the default network that connects the containers or use custom networks. For custom networks, we can use pre-existing networks or create new ones. Let us now consider a YAML file that configures the default network.

    version: '2'
    services:
      web:
        build: .
        ports:
          - "8000:8000"
      db:
        image: postgres
    networks:
      default:
        driver: my-nw-driver-1

In the above YAML file, we define a 'default' entry under 'networks'. It configures the default network that the containers join, here with a custom driver.

We can also specify our own networks with the top-level 'networks' key, which lets us create more complex topologies and choose network drivers and options. We can even use it to connect to externally created networks that are not managed by Docker Compose. When we have multiple services, each service specifies which networks to connect to.

Consider the following YAML file as an example of multiple services and multiple custom networks.

    version: '2'
    services:
      proxy:
        build: ./proxy
        networks:
          - my-nw1
      app:
        build: ./app
        networks:
          my-nw1:
            ipv4_address: 172.16.238.10
            ipv6_address: "2001:3984:3989::10"
          my-nw2: {}
      db:
        image: postgres
        networks:
          - my-nw2
    networks:
      my-nw1:
        # use the bridge driver, but enable IPv6
        driver: bridge
        driver_opts:
          com.docker.network.enable_ipv6: "true"
        ipam:
          driver: default
          config:
            - subnet: 172.16.238.0/24
              gateway: 172.16.238.1
            - subnet: "2001:3984:3989::/64"
              gateway: "2001:3984:3989::1"
      my-nw2:
        # use a custom driver, with no options
        driver: my-custom-driver-1

In the above YAML file, we have created two custom networks, my-nw1 and my-nw2. The service 'proxy' cannot reach the service 'db' because they do not share a network. The service 'app', however, is connected to both networks, so it can reach both 'db' and 'proxy'.

If we want to use a pre-existing network, we use the 'external' option as shown below.

    version: '2'
    networks:
      default:
        external:
          name: pre-existing-nw

So, in this case, Docker Compose will connect the containers to the 'pre-existing-nw' network and not to a default network.
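Because Docker Compose does not create a network marked as external, the network has to exist before the application is started. One possible way to guarantee that is sketched below. The helper name `ensure_network` is hypothetical, and the network is created with Docker's default settings, which is an assumption of this example.

```shell
# Create a network only if it does not exist yet.
# Argument: <network-name>
ensure_network() {
    # "docker network inspect" fails when the network is unknown,
    # in which case the network is created with default settings.
    docker network inspect "$1" >/dev/null 2>&1 || docker network create "$1"
}

# Example calls, run from the directory holding the Compose file:
# ensure_network pre-existing-nw
# docker-compose up -d
```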

Key Takeaways

- Docker installs an Ethernet adapter (docker0) when it is installed on a host. This adapter takes care of networking for Docker.

- Docker supports various network drivers that we can use to network containers. Popular ones are Bridge, Host, Overlay, Macvlan, and None. Bridge is the default network driver for Docker.

- Docker supports the 'network' command, which provides us various operations like the listing of networks present on the host, creating a new network, inspecting a network, etc.

- We can attach a container to a network using the '--net' option of the 'docker run' command.

- Similarly, we can run a container in detached mode using the '-d' option of the 'docker run' command, and have it removed automatically on exit using the '--rm' option.

- When we finish networking, we can disconnect a container from the network.